Pattern 10 (Block Task to Sub-Workflow Decomposition)

Description

The ability to pass data elements from a block task instance to the corresponding subprocess that defines its implementation. Any data elements that are available to a block task can be passed to (or accessed in) the associated subprocess, although only a specifically nominated subset of those data elements is actually passed to the subprocess.

Example

Customer claims data is passed to the Calculate Likely Tax Return block task, whose implementation is defined by a series of tasks in the form of a subprocess. The customer claims data is passed to the subprocess and is visible to each of the tasks in the subprocess.

Motivation

In order for subprocesses to be used in an effective manner within a process model, a mechanism is required that allows data elements to be passed to them.

Overview

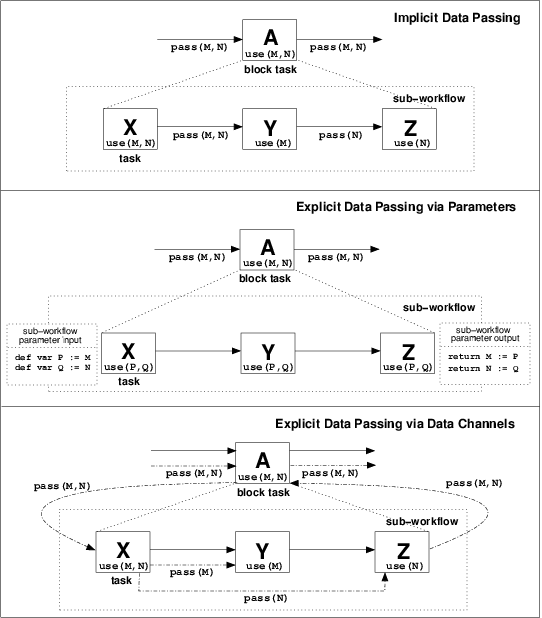

Most PAIS support the notion of composite or block tasks in some form. These are analogous to procedure calls in programming languages: a block task is one whose implementation is described in further detail at another level of abstraction (typically elsewhere in the process design) using the same range of process constructs. The question that arises when data is passed to a block task is whether it is immediately accessible to all of the tasks that define its actual implementation, or whether some form of explicit data passing must occur between the block task and the subprocess. Typically, one of three approaches is taken to handle the communication of parameters from a block task to its underlying implementation. Each of these is illustrated in Figure 11. The characteristics of each approach are as follows:

Figure 11: Approaches to data interaction from block tasks to corresponding sub-workflows

- Implicit data passing - data passed to the block task is immediately accessible to all sub-tasks that make up the underlying implementation. In effect, the block task and the corresponding subprocess share the same address space, and no explicit data passing is necessary.

- Explicit data passing via parameters - data elements supplied to the block task must be specifically passed as parameters to the underlying subprocess implementation. The second example in Figure 11 illustrates how the specification of parameters can handle the mapping of data elements at block task level to data elements at subprocess level with distinct names. This capability provides a degree of independence in the naming of data elements between the block task and subprocess implementation and is particularly important in the situation where several block tasks share the same subprocess implementation.

- Explicit data passing via data channels - data elements supplied to the block task are specifically passed via data channels to all tasks in the subprocess that require access to them.
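The three approaches can be contrasted in a minimal Python sketch. It assumes a data store can be modelled as a plain dictionary and a subprocess as a list of sub-task functions; the function names, data element names and values are hypothetical and are not taken from any of the offerings discussed below.

```python
import copy
from typing import Callable, Dict, List

Data = Dict[str, object]
SubTask = Callable[[Data], None]  # a sub-task reads and writes the data store it is handed


def run_implicit(block_data: Data, subtasks: List[SubTask]) -> None:
    """Implicit passing: every sub-task works on the block task's own store,
    so all block-level elements are visible and none can be withheld."""
    for task in subtasks:
        task(block_data)


def run_with_parameters(block_data: Data, mapping: Dict[str, str],
                        subtasks: List[SubTask]) -> Data:
    """Explicit passing via parameters: only the nominated elements are copied,
    possibly under different names ({block_name: sub_name}), into a separate
    subprocess-level store."""
    sub_data: Data = {sub_name: copy.deepcopy(block_data[block_name])
                      for block_name, sub_name in mapping.items()}
    for task in subtasks:
        task(sub_data)
    return sub_data


def run_with_channels(block_data: Data, channels: List[List[str]],
                      subtasks: List[SubTask]) -> List[Data]:
    """Explicit passing via data channels: sub-task i receives only the
    elements routed to it by channels[i]."""
    inboxes: List[Data] = []
    for names, task in zip(channels, subtasks):
        inbox: Data = {name: copy.deepcopy(block_data[name]) for name in names}
        task(inbox)
        inboxes.append(inbox)
    return inboxes


# Hypothetical block-level data for the Calculate Likely Tax Return block task.
block: Data = {"customer_claims": [120.0, 80.0], "customer_id": "C-17", "audit_flag": True}


def total_claims(d: Data) -> None:
    # Accepts the claims under either the block-level or the subprocess-level name.
    claims = d["claims"] if "claims" in d else d["customer_claims"]
    d["total"] = sum(claims)


params_view = run_with_parameters(block, {"customer_claims": "claims"}, [total_claims])
channel_views = run_with_channels(block, [["customer_claims"]], [total_claims])
run_implicit(block, [total_claims])  # writes "total" straight into the block's own data
```

In the parameter variant, `customer_id` and `audit_flag` never reach the subprocess and the claims arrive under the subprocess-level name `claims`; in the implicit variant, the sub-task's write lands directly in the block task's data.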

Context

There are no specific context conditions associated with this pattern.

Implementation

All of the approaches described above have been observed in the offerings examined. The first approach does not involve any actual data passing between the block activity and its implementation; rather, the block-level data elements are made directly accessible to the subprocess. FLOWer, COSA and BPMN (for Embedded Sub-Processes) use this strategy for passing data to subprocesses. In all cases, the subprocess is presented with the entire range of data elements utilized by the block task, and there is no opportunity to limit the scope of the items passed.

The third approach relies on passing data elements between tasks to communicate between the block task and the subprocess. Both Staffware and XPDL use this mechanism for passing data elements to subprocesses.

In contrast, the second approach necessitates the creation of new data elements at subprocess level to accept the incoming data values from the block activity. WebSphere MQ follows this strategy, using source and sink nodes to pass data containers between the parent task and the corresponding subprocess. iPlanet does so using parameters based on commonly named process attributes in the parent task and the subprocess. BPMN and UML 2.0 ADs can also use this approach, through InputPropertyMaps expressions (and OutputPropertyMaps, where data is passed from the subprocess back to the parent task) and Parameters respectively.
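The second approach can be sketched as two ways of binding newly created subprocess-level elements: matching commonly named attributes (the strategy described above for iPlanet) and an explicit name map, with a separate map for results flowing back to the parent (in the spirit of BPMN's InputPropertyMaps and OutputPropertyMaps). All function and element names are hypothetical; this is an illustration of the idea, not the API of any of these products.

```python
import copy
from typing import Dict, Iterable, Mapping

Data = Dict[str, object]


def bind_by_common_name(parent: Mapping[str, object], formal_params: Iterable[str]) -> Data:
    """Create fresh subprocess-level elements for the named parameters, taking the
    value of the identically named parent attribute (None if the parent lacks it)."""
    return {name: copy.deepcopy(parent.get(name)) for name in formal_params}


def bind_by_map(parent: Mapping[str, object], input_map: Mapping[str, str]) -> Data:
    """Create fresh subprocess-level elements from an explicit
    {subprocess_name: parent_name} map, in the spirit of an input property map."""
    return {sub: copy.deepcopy(parent[par]) for sub, par in input_map.items()}


def copy_back(parent: Data, sub: Mapping[str, object], output_map: Mapping[str, str]) -> None:
    """Return results to the parent via a {parent_name: subprocess_name} map,
    in the spirit of an output property map."""
    for par, sub_name in output_map.items():
        parent[par] = copy.deepcopy(sub[sub_name])


parent: Data = {"claims": [50.0, 25.0], "region": "NSW"}

by_name = bind_by_common_name(parent, ["claims", "rate"])  # "rate" has no parent counterpart
by_map = bind_by_map(parent, {"amounts": "claims"})
by_map["amounts"].append(10.0)                # subprocess-local change only
by_map["estimate"] = sum(by_map["amounts"])   # 50 + 25 + 10
copy_back(parent, by_map, {"likely_return": "estimate"})
```

Because the subprocess elements are deep copies, the append to `amounts` leaves the parent's `claims` untouched; only `estimate` is returned, under the parent-level name `likely_return`.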

Issues

One consideration that may arise where the explicit parameter passing approach is adopted is whether the data elements in the block task and the subprocess are independent of each other (i.e. whether they exist in independent address spaces). If they do not, then the potential exists for concurrency issues to arise as tasks executing in parallel update the same data element.

Solutions

This issue can arise with Staffware, where subprocess data elements are passed as fields rather than as parameters. The resolution is to map the input fields to fields with distinct names that are not used elsewhere during execution of the case.
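The concurrency issue and its resolution can be illustrated with a deterministic simulation. It assumes that each subprocess instance writes its input into a case-level field and reads it back at a later step, and it models parallel execution by advancing Python generators round-robin. The field names are hypothetical and nothing here reflects Staffware's own API.

```python
from typing import Dict, Generator, List, Optional, Tuple

CaseData = Dict[str, float]


def subprocess_instance(case: CaseData, field: str,
                        claim_total: float) -> Generator[None, None, float]:
    """One subprocess instance. The `yield` marks a point where a parallel
    instance may run before this one continues."""
    case[field] = claim_total   # step 1: input passed in via a case-level field
    yield
    return case[field]          # step 2: read the field back to compute a result


def run_interleaved(case: CaseData, jobs: List[Tuple[str, float]]) -> List[Optional[float]]:
    """Advance all instances one step at a time, round-robin, and collect results."""
    gens = [subprocess_instance(case, field, amount) for field, amount in jobs]
    results: List[Optional[float]] = [None] * len(gens)
    pending = list(range(len(gens)))
    while pending:
        for i in list(pending):
            try:
                next(gens[i])
            except StopIteration as stop:
                results[i] = stop.value  # the generator's return value
                pending.remove(i)
    return results


# Both instances reuse one field name: the second overwrites the first's input.
shared = run_interleaved({}, [("claim_total", 100.0), ("claim_total", 40.0)])
# Resolution: map each instance's input to a distinct field name.
distinct = run_interleaved({}, [("claim_total_1", 100.0), ("claim_total_2", 40.0)])
```

With a shared field, the first instance reads back 40.0 instead of its own 100.0; with distinct field names, each instance sees only its own input.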

Evaluation Criteria

An offering achieves full support if it has a construct that satisfies the description for the pattern. It achieves a partial support rating if data can be made available to the subprocess but it is not possible to limit the range of data elements that are accessible to it.

Product Evaluation

To achieve a + rating (direct support) or a +/- rating (partial support) the product should satisfy the corresponding evaluation criterion of the pattern. Otherwise a - rating (no support) is assigned.
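The evaluation criterion amounts to a small decision rule. The sketch below encodes it with two hypothetical yes/no properties per offering; the three sample encodings are a reading of the Motivation column in the table below, not an independent assessment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offering:
    name: str
    passes_data: bool   # block-task data can reach the subprocess at all
    can_restrict: bool  # only a nominated subset of elements need be passed


def rate(o: Offering) -> str:
    """'+' for direct support, '+/-' for partial support, '-' for none."""
    if not o.passes_data:
        return "-"
    return "+" if o.can_restrict else "+/-"


flower = Offering("FLOWer 3.0", passes_data=True, can_restrict=False)  # implicit sharing only
uml = Offering("UML 2.0", passes_data=True, can_restrict=True)         # parameter passing
bpel = Offering("BPEL4WS 1.1", passes_data=False, can_restrict=False)  # not supported

ratings = {o.name: rate(o) for o in (flower, uml, bpel)}
```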

| Product/Language | Version | Score | Motivation |
|---|---|---|---|
| Staffware | 9 | + | Indirectly supported via field mappings |
| WebSphere MQ Workflow | 3.4 | + | Facilitated via data containers and source/sink nodes |
| FLOWer | 3.0 | +/- | Supported via implicit data passing between shared data elements, but no ability to restrict the range of data elements passed |
| COSA | 4.2 | +/- | Supported by data sharing (implicit data passing), but no ability to limit the range of items passed |
| XPDL | 1.0 | + | The "subflow" element provides actual parameters, which should be matched by the formal parameters of the corresponding workflow process. This is not spelled out in detail, but the intention is clear. |
| BPEL4WS | 1.1 | - | Not supported |
| BPMN | 1.0 | + | i) Implicit data passing through global shared data is applied when a decomposition is realised via an Embedded Sub-Process. ii) Explicit data passing via parameters is applied when a decomposition is realised through an Independent Sub-Process; in this case, the data transfer is defined through the InputPropertyMaps and OutputPropertyMaps expressions of the Sub-Process. |
| UML | 2.0 | + | Supported by parameter passing between the block task and the decomposition |
| Oracle BPEL | 10.1.2 | - | Not supported |
| jBPM | 3.1.4 | - | jBPM does not support this pattern, as all attempts to define a process decomposition through the GPD failed. |
| OpenWFE | 1.7.3 | + | OpenWFE supports data interaction with sub-workflow decompositions both through 1) global shared data and 2) parameter passing. (Both apply to sub-processes defined within the main process and to sub-processes residing outside the file containing the main process definition.) |
| Enhydra Shark | 2 | + | Enhydra Shark supports data interaction with sub-process decompositions. There are two ways to define a sub-process: by introducing a block activity or by introducing a sub-flow. A block activity is embedded within the main workflow definition and shares the same global data as its embedding workflow. A sub-flow is an independent process, which can be invoked by any workflow in the same package; data is passed to it through parameters. |

Summary of Evaluation

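| + Rating | +/- Rating |
|---|---|
| Staffware 9, WebSphere MQ Workflow 3.4, XPDL 1.0, BPMN 1.0, UML 2.0, OpenWFE 1.7.3, Enhydra Shark 2 | FLOWer 3.0, COSA 4.2 |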